AI That Understands Your Business

Our AI Reliability platform gives teams a complete lifecycle solution for building, evaluating, deploying, and continuously improving AI agents, LLMs, and ML models. Closed feedback loops help you optimize prompts, identify best-fit models, protect data integrity, reduce the risk of unpredictable output, and capture real-world user feedback to improve AI quality.

AI Systems That Get Smarter, Safer, and More Reliable Over Time.

General-purpose AI models do not know your systems, your workflows, or what “normal” looks like for your business. That gray area is where unpredictable behavior can create real reputational risk once AI reaches production. InsightFinder closes the feedback loop between AI development and production by capturing how real users interact with your services, turning those production signals into high-value training data, and using them to continuously improve prompts, models, and behavior. The result is AI that adapts quickly to your business context—getting smarter, safer, and more reliable over time with every real-world interaction.

From Demo-Ready to Production-Reliable, at a Fraction of the Cost.

InsightFinder delivers an end-to-end AI reliability platform built to solve the full lifecycle problem—not just one piece of it. Instead of forcing teams to stitch together fragmented point solutions that break the connection between production and development, InsightFinder provides a cohesive workflow that helps AI systems succeed in the real world, then continuously improve over time. The result is a more reliable rollout, a faster path to value, and a proven alternative to expensive, brittle toolchains.

InsightFinder's AI Observability Platform Features

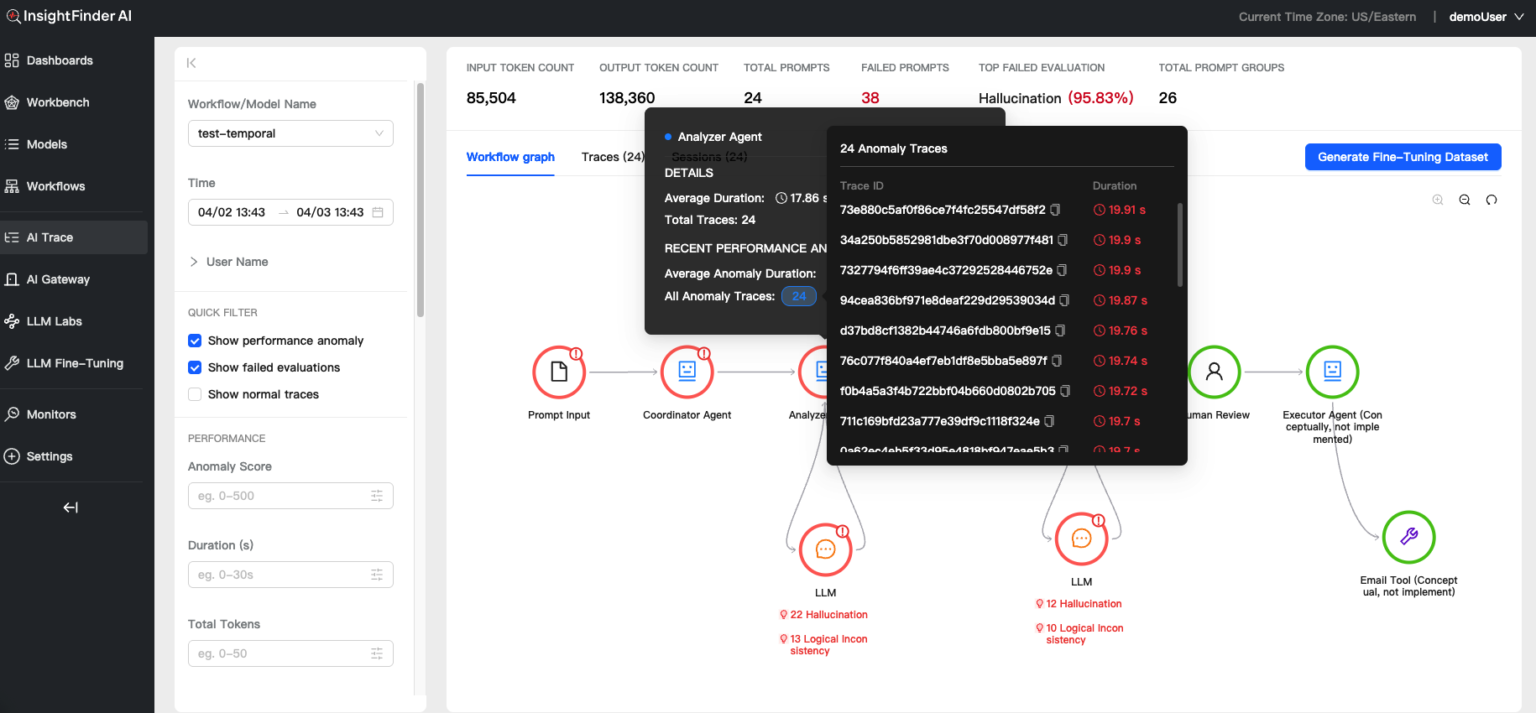

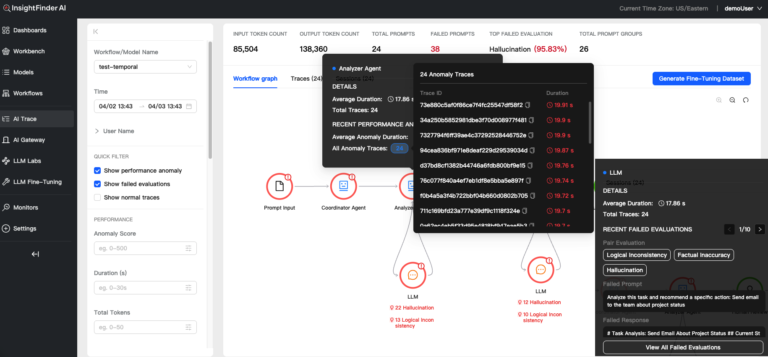

Multi-agent tracing

Gain end-to-end visibility into complex multi-agent AI workflows with distributed tracing built for agentic systems. Trace every step across agents, tools, and handoffs in a single view, while surfacing performance anomalies, token consumption, and failed evaluations in context. See the most common reasons evals fail to pinpoint reliability issues faster and improve agent behavior with less guesswork.

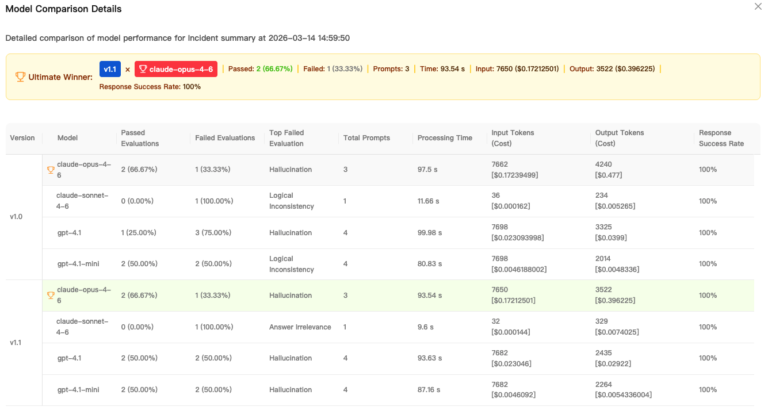

LLM Prompt Comparison

Compare prompt performance across multiple underlying LLMs to find the best fit for your use case. Version prompt packs, run side-by-side tests, and evaluate outcomes across failures, execution time, token cost, and success rates in one place. Choose the right model for each prompt set, optimize quality and cost, and improve results with confidence.
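
As a rough illustration of the comparison described above, the sketch below aggregates test runs per model into failures, success rate, average execution time, and total cost. The record fields (`model`, `ok`, `seconds`, `cost`) and the function name are assumptions made for this example, not InsightFinder's data model or API.

```python
from collections import defaultdict

def summarize_runs(runs):
    """Aggregate per-model statistics from a list of run records.

    Each record is a dict with hypothetical keys:
    model (str), ok (bool), seconds (float), cost (float).
    """
    grouped = defaultdict(list)
    for run in runs:
        grouped[run["model"]].append(run)

    summary = {}
    for model, items in grouped.items():
        n = len(items)
        passed = sum(1 for r in items if r["ok"])
        summary[model] = {
            "runs": n,
            "failures": n - passed,
            "success_rate": passed / n,
            "avg_seconds": sum(r["seconds"] for r in items) / n,
            "total_cost": sum(r["cost"] for r in items),
        }
    return summary
```

Sorting the resulting summary by success rate first and cost second is one simple way to shortlist a model for a given prompt set.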

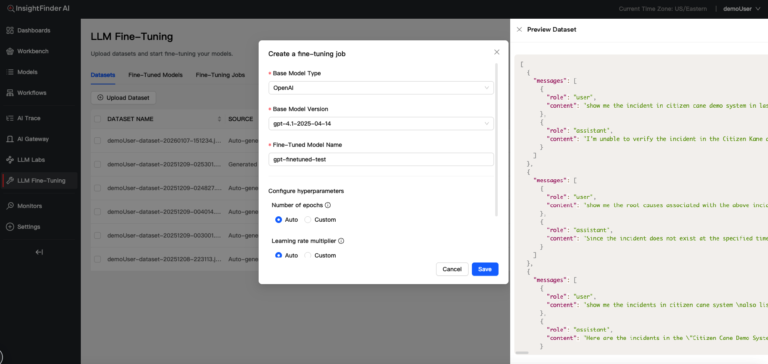

Model Fine Tuning

Turn failed prompts into a continuous improvement loop for your AI systems. Automatically generate training datasets from real-world failures and use them to run reinforcement learning jobs that adapt foundational models into custom models tuned for your domain. Improve model behavior based on production evidence, not guesswork, while accelerating the path to more accurate, reliable outputs.

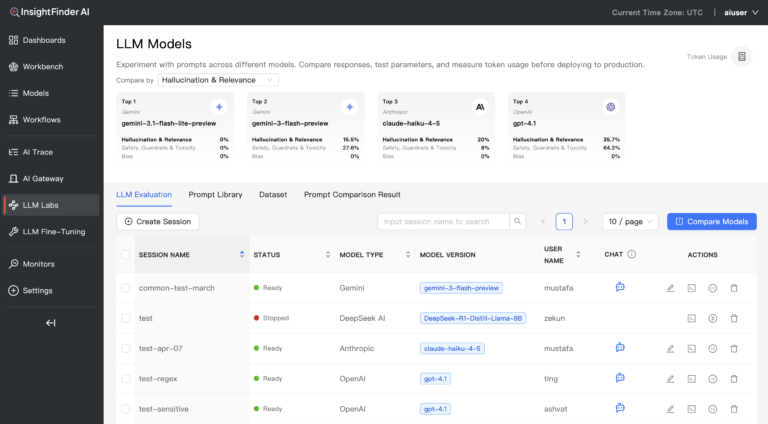

LLM Labs

Evaluate and select the right LLMs for your use case with LLM Labs, a one-stop environment for model testing and comparison. Compare foundational and open-source models side by side, and apply guardrails for bias, hallucination, safety, and other quality dimensions to measure how each model performs across the metrics that matter. Make faster, more confident model decisions while improving output quality, reducing risk, and avoiding costly trial and error.

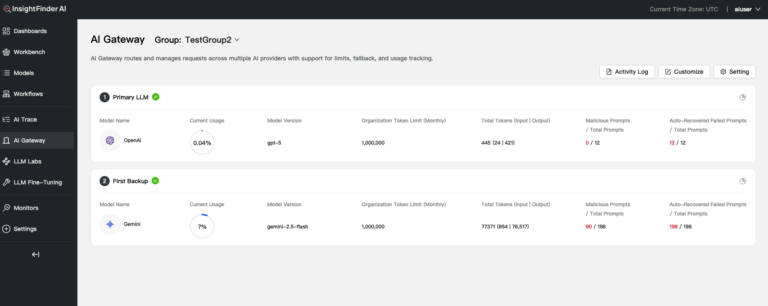

AI Gateway

Deploy and govern AI models in production with an AI Gateway built to balance performance, cost, and control. Route traffic across foundational and open-source models, manage availability, overcome rate limits, and apply continuous trust and safety screenings as requests flow through the system. With built-in open-source LLM hosting, teams can run AI more reliably at scale while maintaining stronger operational and governance guardrails.

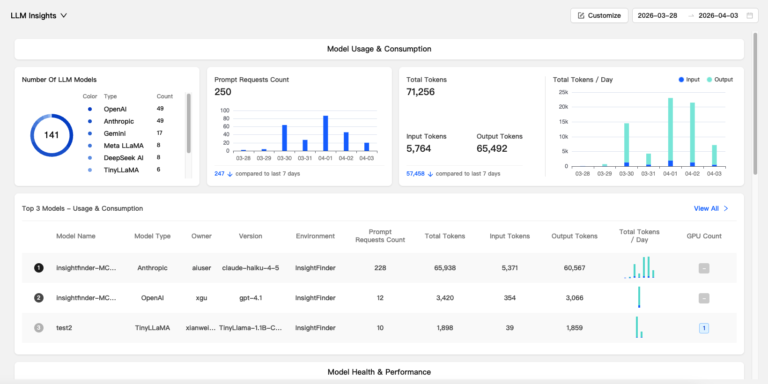

LLM Observability

Manage all your LLMs in one place with observability built for performance, cost, and trust. Track input and output token usage, response times, change events, failed evaluations, and overall model behavior, then use LLM traces to drill into issues at the individual prompt level. With dedicated monitors and workbenches for trust and safety, cost, and performance, teams can detect problems faster, understand model behavior more clearly, and optimize production AI with greater confidence.

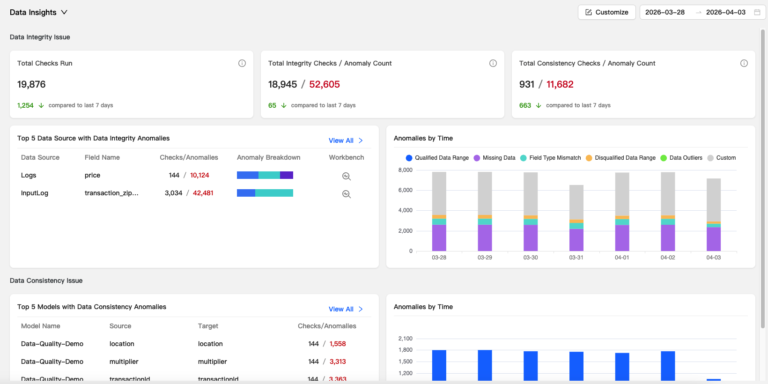

Data Integrity Insights

Monitor data quality with Data Integrity Insights, built to surface anomalies and consistency issues before they impact models or downstream systems. Quickly detect missing data, field type mismatches, outliers, and custom conditions across any dataset, then trace issues back to their source and track trends over time. This helps teams protect data reliability, catch problems earlier, and maintain greater confidence in the systems built on top of that data.
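
To make these checks concrete, here is a minimal sketch of how missing values, field type mismatches, and outliers could be flagged in a batch of records. It is a generic illustration, not InsightFinder's implementation: the function names, the schema format, and the choice of a modified z-score (median and MAD, with the commonly used 3.5 cutoff) for outliers are all assumptions.

```python
from statistics import median

def is_number(value):
    # bool is a subclass of int in Python, so exclude it explicitly.
    return isinstance(value, (int, float)) and not isinstance(value, bool)

def check_records(records, schema, threshold=3.5):
    """Return (row_index, field, issue) tuples for a list of dict records.

    schema maps field name -> expected type (or tuple of types).
    """
    issues = []
    for i, row in enumerate(records):
        for field, expected in schema.items():
            value = row.get(field)
            if value is None:
                issues.append((i, field, "missing"))
            elif not isinstance(value, expected):
                issues.append((i, field, "type_mismatch"))

    # Outliers: modified z-score based on median and median absolute deviation.
    for field in schema:
        points = [(i, row[field]) for i, row in enumerate(records)
                  if is_number(row.get(field))]
        if len(points) < 3:
            continue
        med = median(v for _, v in points)
        mad = median(abs(v - med) for _, v in points)
        if mad == 0:
            continue  # no spread to measure against
        for i, v in points:
            if abs(0.6745 * (v - med) / mad) > threshold:
                issues.append((i, field, "outlier"))
    return issues
```

Each returned tuple carries the row index and field name, which is the minimum needed to trace an issue back toward its source.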

ML Observability

Monitor ML models in production with observability designed to catch drift, bias, and performance risk early. Track data drift, concept drift, and feature-level bias, while using local and global explainability with SHAP values to better understand model behavior and decision drivers. With built-in root cause analysis and auto-remediation for bias, model drift, and data drift, teams can maintain model quality, improve trust, and respond to issues before they impact outcomes.

Key Capabilities of InsightFinder’s AI Observability Platform

Flexible Deployment Options

SaaS

On-Premises

Co-Pilot

Query and drill into model, LLM, and system data

Troubleshoot models and perform full root cause analysis

Fast Onboarding

Model Management: simple setup and definition of each model and its associated model data

Integrations: onboard model data from OpenTelemetry, Elastic, Prometheus, and Google BigQuery

Add workbench for each use case in minutes

Model Monitoring

Out-of-the-box monitors for data & model drift, LLM Trust & Safety, LLM performance, model data quality, and more

Automatic detection of model drift, model performance and model accuracy anomalies

Unified observability across LLMs and ML models

IFTracer SDK for collecting streaming prompt data (traces and spans)

Email notifications on health and performance for each monitor

Workbench

Analyze anomalies and perform deep-dive analysis

Trace Viewer for inspecting LLM traces with anomaly signals

Prompt Viewer for identifying anomalous or high-risk prompts

Charts with flexible filtering for fast diagnosis

Compare models, anomalies, cost

Timeline view to analyze when anomalies occur, support root cause analysis, and more

Instant workbench creation for each use case

Dashboards

Tailored dashboards for LLM and ML models

Data quality, model drift, total model performance (ML)

Token consumption, malicious prompt identification (LLM), cost

Analyze model drift using PSI or distance metrics

LLM Insights Dashboard for model usage & consumption, model health & performance
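
For readers unfamiliar with PSI (Population Stability Index), mentioned in the list above: it compares the binned distribution of a baseline sample with a recent sample as PSI = sum over bins of (a_i - e_i) * ln(a_i / e_i), where e_i and a_i are the baseline and recent proportions in bin i. The sketch below is a generic implementation of that formula, not InsightFinder's code; the equal-width binning and the `eps` floor for empty bins are assumptions.

```python
import math

def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline and a recent sample."""
    lo, hi = min(expected), max(expected)
    # Fall back to a width of 1.0 if the baseline has no spread.
    width = (hi - lo) / bins or 1.0

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Clamp values outside the baseline range into the edge bins.
            counts[min(max(idx, 0), bins - 1)] += 1
        total = len(values)
        # eps keeps empty bins from causing log(0) or division by zero.
        return [max(c / total, eps) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

A commonly cited rule of thumb treats values below 0.1 as stable and values above 0.25 as a significant shift, though suitable thresholds depend on the feature and the bin count.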

LLM Labs

Compare foundational and open-source LLMs

Host open-source models during evaluation

Evaluate hallucination, safety, relevance, and irrelevance

Apply LLM Guardrails and evaluations

Batch prompt processing and A/B testing

Model fine tuning

LLM Gateway

Model resilience – automatic recovery from foundational model outages

Overcome rate limits

Intelligent routing between models based on response time, cost, and token limits

LLM Guardrails – continuous safety checks for 15+ measures

Model hosting for production open-source LLMs
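
The routing and resilience items above can be pictured with a small sketch: filter out models that are unhealthy or cannot fit the request, then pick the one with the best weighted mix of response time and cost. This is an illustrative assumption about how such a router might work, not the gateway's actual logic; `ModelStats`, `pick_model`, and the weights are hypothetical names and parameters.

```python
from dataclasses import dataclass

@dataclass
class ModelStats:
    name: str
    avg_latency_ms: float       # recent average response time
    cost_per_1k_tokens: float   # price in dollars
    max_tokens: int             # token limit for a single request
    healthy: bool = True        # False while the provider is failing

def pick_model(models, prompt_tokens, latency_weight=0.5, cost_weight=0.5):
    """Choose the healthy model with the lowest weighted latency/cost score."""
    candidates = [m for m in models
                  if m.healthy and m.max_tokens >= prompt_tokens]
    if not candidates:
        raise RuntimeError("no healthy model can accept this request")

    # Normalize each dimension so the weights are comparable; "or 1.0"
    # avoids division by zero when every candidate reports 0.
    max_latency = max(m.avg_latency_ms for m in candidates) or 1.0
    max_cost = max(m.cost_per_1k_tokens for m in candidates) or 1.0

    def score(m):
        return (latency_weight * m.avg_latency_ms / max_latency
                + cost_weight * m.cost_per_1k_tokens / max_cost)

    return min(candidates, key=score)
```

Marking a model as unhealthy removes it from the candidate set, so traffic falls over to the next-best option without any change to the caller.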

Model Context Protocol (MCP) Server

LLMs interact directly with the InsightFinder platform

AI tools tap directly into incidents, log anomalies, and metric anomalies through secure, natural language queries

Easy Integrations

InsightFinder AI’s anomaly detection, root cause analysis, and incident predictions integrate easily with leading observability platforms, bringing powerful AI-driven analysis to your existing observability and monitoring environment.

- SageMaker
- Snowflake
- Google BigQuery
- Databricks
- Temporal
- OpenAI
- Anthropic
- Gemini


See how InsightFinder helps your team deliver reliable services across every layer of the stack

Take InsightFinder AI for a no-obligation test drive. We’ll provide you with a detailed report on your outages to uncover what could have been prevented.