AIOps usually enters team conversations through alert fatigue: too many pages, too many duplicate incidents, too much manual triage. It’s an understandable starting point because every operations leader has seen teams burn hours sorting noisy signals before they can even begin diagnosis.

But alert reduction isn’t the same as reliability improvement. Many teams discover this after deploying correlation-heavy AIOps and still struggle with slow investigations, uncertain root causes, and escalations that depend on whoever happens to know the system best. The alerts look cleaner, but the incident still feels messy.

That gap sits at the center of our webinar, “Replacing Rule-Based AIOps with Predictive Reliability Workflows.” In it, we make a sharper argument: if AIOps only learns from alerts, it’s learning from evidence that has already been compressed, filtered, and delayed.

Modern reliability needs richer system evidence, not just smarter alert grouping.

Alerts Aren’t Ground Truth

An alert isn’t the system. It’s a conclusion produced by thresholds, routing rules, suppression logic, aggregation windows, and assumptions about what failure should look like. That makes alerts useful for paging humans, but risky as the primary input for operational AI.

The problem is timing and context. Many serious incidents begin as weak signals that don’t trip a threshold. A queue starts growing slowly. A dependency gets a little slower. Retry volume creeps upward. A regional p99 drift stays below the paging line, but customers are already starting to feel the change.
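To make the slow-growing queue concrete, here is a minimal sketch in Python. The paging threshold, the slope cutoff, and the sample values are all made-up illustrations, not recommended settings or any product's method. It shows a static threshold staying silent while a simple trend check flags the growth.

```python
from statistics import mean

PAGING_THRESHOLD = 1000  # hypothetical queue-depth paging line


def static_alert(samples, threshold=PAGING_THRESHOLD):
    """Fire only if some sample reaches the paging threshold."""
    return any(s >= threshold for s in samples)


def slope_per_sample(samples):
    """Least-squares slope of the samples against their index."""
    n = len(samples)
    if n < 2:
        return 0.0
    x_mean = (n - 1) / 2
    y_mean = mean(samples)
    num = sum((i - x_mean) * (y - y_mean) for i, y in enumerate(samples))
    den = sum((i - x_mean) ** 2 for i in range(n))
    return num / den


def weak_signal(samples, min_slope=5.0):
    """Flag sustained growth, even far below the paging line (assumed cutoff)."""
    return slope_per_sample(samples) >= min_slope


# Illustrative data: queue depth climbs by 10 per sample, from 200 to 490.
queue_depth = [200 + 10 * i for i in range(30)]

print("static alert fired:", static_alert(queue_depth))
print("weak signal flagged:", weak_signal(queue_depth))
```

A linear slope is only one possible trend test. The point is that the check runs on the raw samples rather than on whatever the threshold rule chose to emit.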

An alert-only AIOps platform can’t recover what it never saw. It can only analyze the signals that existing rules have already interpreted as meaningful events. That means the system inherits the blind spots of the alerting strategy, then tries to reason from an incomplete picture.

For predictive reliability, that’s not enough. AI needs access to the evidence trail before the incident becomes obvious.

Why “Full Coverage” Doesn’t Mean More Telemetry

When teams hear that AIOps needs logs, metrics, traces, topology, and change data, the first reaction is often concern about cost. Fair concern. Nobody wants an observability strategy that turns into skyrocketing bills for unlimited ingestion and retention.

But full coverage doesn’t mean storing everything forever. It means preserving enough evidence to understand what changed, where it propagated, and which response path makes sense. The goal isn’t telemetry accumulation. The goal is faster, more confident incident reasoning.

Metrics show the physics of the system. They reveal pressure, saturation, throughput shifts, backlog growth, and slow-burn degradation. Traces show where time accumulates across service boundaries. They make it easier to see which dependency edge is carrying latency into the customer experience. Logs add semantic detail, including exceptions, timeout behavior, fallback modes, and application-specific clues that metrics can’t explain on their own.

For AI and ML systems, the evidence surface expands further. Reliability issues may come from model drift, retrieval failures, prompt changes, feature pipeline problems, or quality regressions that never show up as classic infrastructure failures. A modern AIOps strategy has to account for that reality.

Incidents Rarely Start Where They’re Detected

Distributed systems often fail through propagation chains. The first meaningful signal may be quiet, while the first obvious alert may appear much later. That’s why alert-only AIOps can make an incident look cleaner without making it easier to solve.

Consider a customer-facing application where a small deployment changes retry behavior. The change doesn’t break anything immediately, so no major alert fires. Over the next hour, retries add pressure to a downstream service. Queue depth grows, latency rises, and upstream services hold resources longer. Eventually, timeouts spike and dashboards light up.

A correlation engine may group the timeouts, latency alarms, and resource saturation alerts into a single incident. That’s useful, but it points the team toward the loudest symptoms at the end of the chain. The better question is: what happened before the incident crossed the paging threshold?

That’s where system evidence matters. If the platform can connect deployment activity, retry growth, queue behavior, trace latency, and logs into a coherent trail, responders get a much better starting point. They’re no longer asking which alert is “real.” They’re testing a plausible explanation.
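One rough way to picture that trail is a timeline ordered by when each signal first appeared. The sketch below is illustrative only: the `Evidence` type, the service names, and the minute offsets are hypothetical, loosely following the retry example above. Ordering by onset does not prove causation. It simply gives responders a hypothesis to test.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Evidence:
    source: str        # "change", "metric", "trace", "log", or "alert"
    summary: str
    onset_minute: int  # minutes since the start of the window (illustrative)


def build_trail(evidence):
    """Order evidence by when each signal first appeared."""
    return sorted(evidence, key=lambda e: e.onset_minute)


def first_page_index(trail):
    """Position of the first alert in the trail, or None if nothing paged."""
    for i, item in enumerate(trail):
        if item.source == "alert":
            return i
    return None


# Hypothetical observations, deliberately out of order.
observed = [
    Evidence("alert", "timeout spike on checkout API", 60),
    Evidence("metric", "retry volume rising on payment client", 10),
    Evidence("change", "deployment altered retry behavior", 0),
    Evidence("trace", "latency accumulating at downstream service", 40),
    Evidence("metric", "queue depth growing downstream", 25),
]

trail = build_trail(observed)
page_at = first_page_index(trail)
pre_alert = trail[:page_at] if page_at is not None else trail

# Everything that happened before the incident crossed the paging threshold.
for item in pre_alert:
    print(f"t+{item.onset_minute:>2}m  {item.source:<6}  {item.summary}")
```

An alert-only view would contain just the last entry. The four earlier entries are the part of the chain that never reached the alert stream.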

Correlation Helps, Until It Plateaus

Correlation has a place in operations. Deduplication reduces noise. Symptom grouping makes paging more manageable. Automated incident creation can remove manual ticketing work. These are real gains, especially for teams drowning in fragmented alerts.

The plateau happens when teams need answers that correlation can’t provide. Which signal appeared first? Which symptom is downstream? What evidence supports the recommended root cause? What action is most likely to reduce impact quickly?

Those questions matter because the cost of an incident isn’t measured only in alert volume. It’s measured in recovery time, customer impact, escalation load, and the amount of senior engineering attention consumed by diagnosis. Correlation can help teams hear less noise, but predictive reliability helps them act with more confidence.

That’s the distinction many AIOps tools miss. Reducing the number of alerts is useful. Reducing the time to a credible hypothesis is even more valuable.

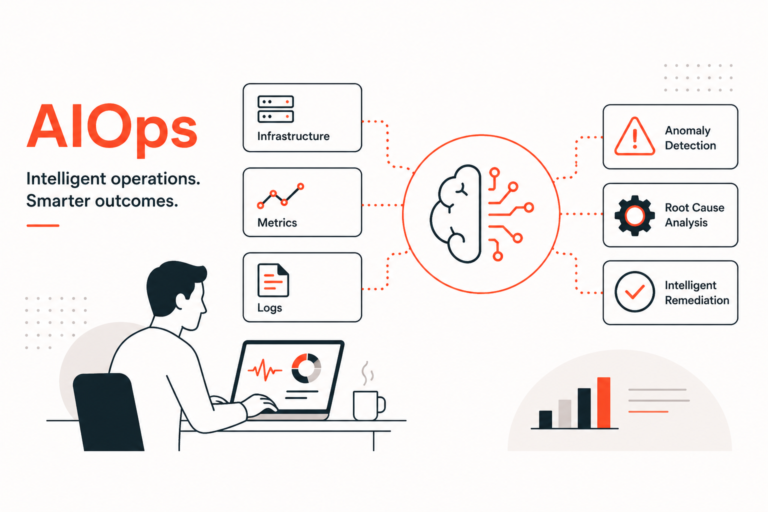

Predictive Reliability Starts With Cross-Domain Evidence

A predictive reliability workflow needs to detect problems before an alert fires. That means anomaly detection should operate on raw telemetry and system context, not just event streams. Weak signals often exist in metrics, traces, logs, and topology long before they become pages.

It also needs to produce evidence engineers can inspect. Operational AI has to earn trust during high-pressure moments. A responder is unlikely to act on a black-box claim during a production incident, especially if the recommendation conflicts with intuition or past experience.

The best workflows show their work. They connect the metric shift, the trace path, the log pattern, the topology relationship, and the change event behind the recommendation. That evidence doesn’t remove engineers from the loop. It gives engineers a shorter path to validation.
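As a sketch of what “showing the work” could look like in data terms, the Python below attaches evidence to a recommendation and makes any gaps visible. The `Recommendation` type, its field names, and the sample values are assumptions made for illustration. They do not describe any particular platform’s schema.

```python
from dataclasses import dataclass, field

# The five evidence kinds named in the paragraph above.
REQUIRED_KINDS = ("metric", "trace", "log", "topology", "change")


@dataclass
class Recommendation:
    action: str
    evidence: dict = field(default_factory=dict)  # kind -> short description

    def missing_evidence(self):
        """Evidence kinds a responder cannot yet inspect."""
        return [k for k in REQUIRED_KINDS if k not in self.evidence]

    def render(self):
        """Plain-text summary that states gaps instead of hiding them."""
        lines = [f"Recommended action: {self.action}"]
        for kind in REQUIRED_KINDS:
            detail = self.evidence.get(kind, "no evidence attached")
            lines.append(f"- {kind}: {detail}")
        return "\n".join(lines)


# Hypothetical recommendation with one evidence kind still missing.
rec = Recommendation(
    action="roll back the retry configuration change",
    evidence={
        "metric": "retry volume rising for the past hour",
        "trace": "latency accumulating at the downstream call",
        "log": "repeated timeout exceptions in the client",
        "change": "deployment that altered retry behavior",
    },
)

print(rec.render())
print("missing:", rec.missing_evidence())
```

Surfacing the missing topology link, rather than omitting it, lets the responder decide how much weight the recommendation deserves.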

Finally, predictive reliability must meet teams where they already work. If insight lands in a separate console after responders have moved into Slack, ServiceNow, PagerDuty, Jira, or an incident bridge, it may arrive too late to change behavior. Context has to travel into the workflow, not sit outside it.

How to Evaluate AIOps Platforms

AIOps evaluation often gets trapped in feature checklists. A better lens is operational outcomes. Does the platform ingest evidence beyond alerts? Can it detect weak signals before customer impact? Does it explain how symptoms are connected over time? Can responders inspect the evidence behind the recommendation?

Workflow fit deserves the same scrutiny. A platform may have strong analytics and still fail in practice if engineers have to context-switch during an incident. The most useful systems enrich existing response processes with causal context, impact awareness, and recommended next steps.

For executives, this reframes the buying decision. The question isn’t whether the platform can reduce alert volume. The question is whether it can help the organization recover faster, reduce avoidable escalations, and move from reactive firefighting toward predictive reliability.

Many AIOps tools are limited by design. Detection starts too late, sees too little, and often confuses grouped symptoms with diagnostic clarity. That doesn’t mean correlation is useless. It means correlation should be one capability inside a broader reliability workflow, not the foundation of the entire strategy.

Build AIOps on Evidence, Not Alert Artifacts

Modern AIOps needs multi-layer system evidence, early anomaly detection, causal analysis, and delivery inside the incident workflow. That’s the shift behind InsightFinder’s approach to predictive reliability: move beyond alert artifacts and use the system’s own evidence to help teams understand what’s changing before impact spreads.

For more about these challenges, check out our webinar. To evaluate what this looks like in your environment, request a demo and see how InsightFinder brings a more modern take on AIOps to incident prediction, root cause analysis, and operational response.