Resources

Prompt comparison for LLMs with multidimensional evaluation

Most teams don’t just “ship a prompt.” They ship behaviors that must hold up…

Read more

Replacing Rule-Based AIOps with Predictive Reliability Workflows

Less tuning. Earlier signal. Faster triage—built for AI-era production. A lot of “AIOps” is…

Read more

Meet your company’s new HR reps: AI agents

This article was written by Victor Dey and originally posted on FastCo. The tech…

Read more

InsightFinder 2025 Retrospective: From Observability Insights to Operational Actions

In 2025, the reliability bar kept moving forward. Engineering teams shipped more distributed systems,…

Read more

InsightFinder AI Launches ARI, an Operational Reliability Agent Built for the AI Era

This post was originally published on AIthority. ARI (Autonomous Reliability Insights) brings instant root…

Read more

Why Traditional Observability Fails in AI Production (And What to Do Instead)

AI systems are forcing engineering leaders to confront an uncomfortable reality: the observability practices…

Read more

OTel me more about using InsightFinder

Teams often presume that trying a new AI observability tool means re-instrumenting code, swapping…

Read more

Meet ARI: The Operational Reliability Agent

In this walkthrough, we introduce ARI, an AI agent that doesn’t just chat—it works…

Read more

Introducing ARI: InsightFinder’s New Operational Reliability AI Agent

Production reliability is hitting a breaking point. As systems become more distributed and deployments…

Read more

InsightFinder’s Patent for Automated Incident Prevention is Granted

InsightFinder has been granted its automation patent, which completes its unique closed-loop reliability platform…

Read more

AI Agents: The New Path Forward and How Reliability Catches Up

AI applications are shifting from “answer engines” to “action engines.” The moment an AI…

Read more

How to Monitor AI Agents: Reliability Challenges and Observability Best Practices

AI agents represent a shift in how language models are used in production. Unlike…

Read more

Beyond Observability: Continuous Improvement Workflows for Production in the AI Era

Meet InsightFinder’s new AI agent, ARI, who helps you understand what’s breaking in your stack:…

Read more

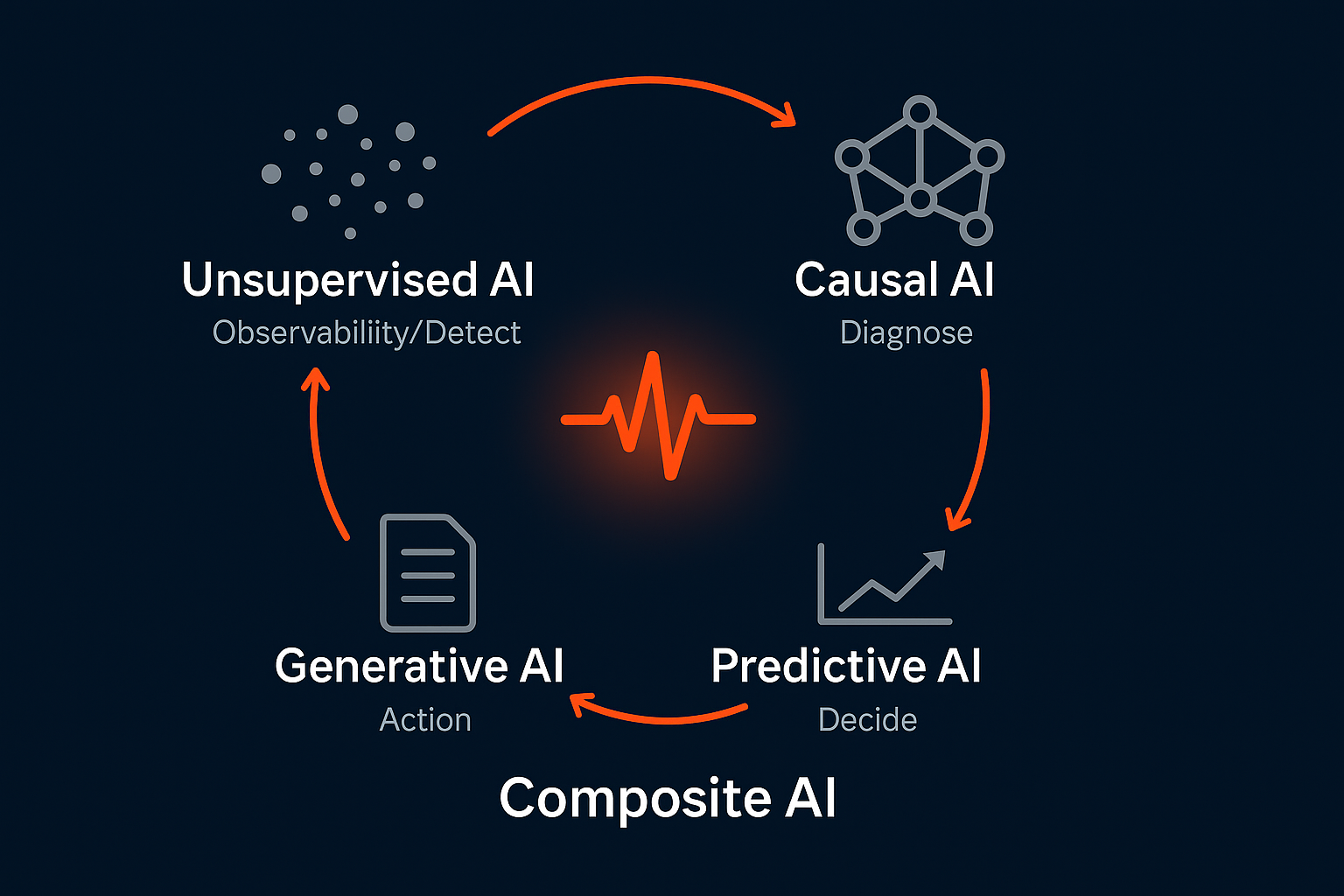

Composite AI for IT Observability: Why Generative AI Alone Is Not Enough

Composite AI refers to the use of multiple AI techniques, such as unsupervised learning,…

Read more

What Is Composite AI? A Practical Guide to Reliable and Trustworthy AI Systems

InsightFinder’s Composite AI blends unsupervised ML, predictive drift modeling, causal dependency mapping, and GenAI summaries into one cohesive reliability engine.

Read more

See how InsightFinder helps your team deliver reliable services across every layer of the stack

Take InsightFinder AI for a no-obligation test drive. We’ll provide you with a detailed report on your outages to uncover what could have been prevented.